Up to €200,000 in funding for startups and SMEs – FFplus call is now open

We’re excited to share that the second FFplus Open Call for Business Experiments is now open! This is a unique opportunity for SMEs and startups across Europe to explore the benefits of high-performance computing (HPC), with funding of up to €200,000 per project.

Who can apply?

- SMEs and startups from Europe or Horizon Europe-eligible countries with no prior experience using HPC.

- Consortia consisting of one SME and up to 4 supporting partners. Supporting partners can be any type of organisation eligible to participate in the Digital Europe framework programme.

- Proposals can request a maximum of €200,000 in total funding (shared across all partners).

Is your company active in generative A.I.?

- SMEs and startups active in generative A.I. that need to scale up to large- or extreme-scale computing resources are invited to apply for an Innovation Study.

- The call for Innovation Studies is expected to open soon! Register for updates via the link below.

- Details such as the deadline, funding amount and consortium requirements may differ from the Business Experiments details listed here.

Which costs are eligible?

- 100% funding of eligible direct costs incurred for personnel, equipment, travel and materials needed to complete the experiment; indirect costs and overheads are not funded.

- For supporting consortium partners, only engineering activities are eligible for funding.

- HPC time should be acquired via EuroHPC JU access schemes.

- More information can be found in the call document.

Need more info?

- Please contact us through the dedicated contact form.

- All information can be found on the FFplus website.

- Follow the webinar by the FFplus organisation:
  - 📅 23 June
  - 🔗 Webinar details and registration

- Previous FF success stories can be found here.

- All details, documentation, and application guidelines for the Open Call are available here.

- All proposals must be submitted by 17:00 Brussels local time on 26 August 2025.

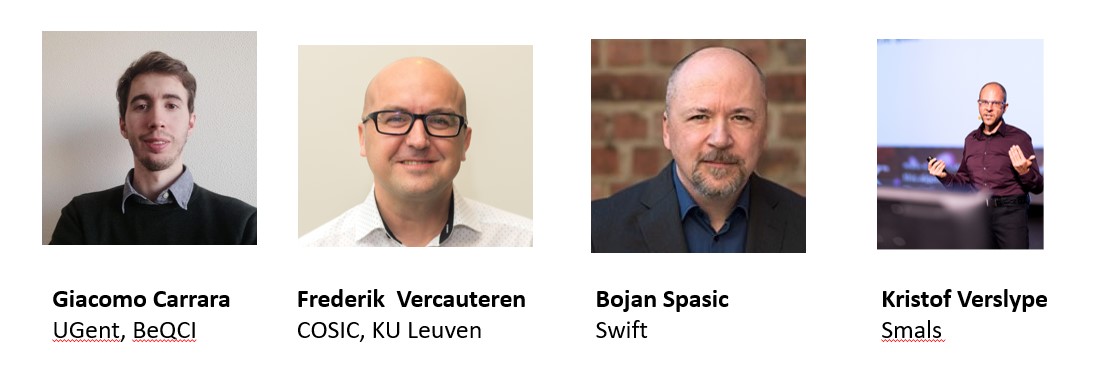

Request support from EuroCC Belgium, VSC and Cenaero with your application or for any questions.

Practical info