User Story: Emotionally Intelligent AI - From University Research to Market Innovation with Alfasent

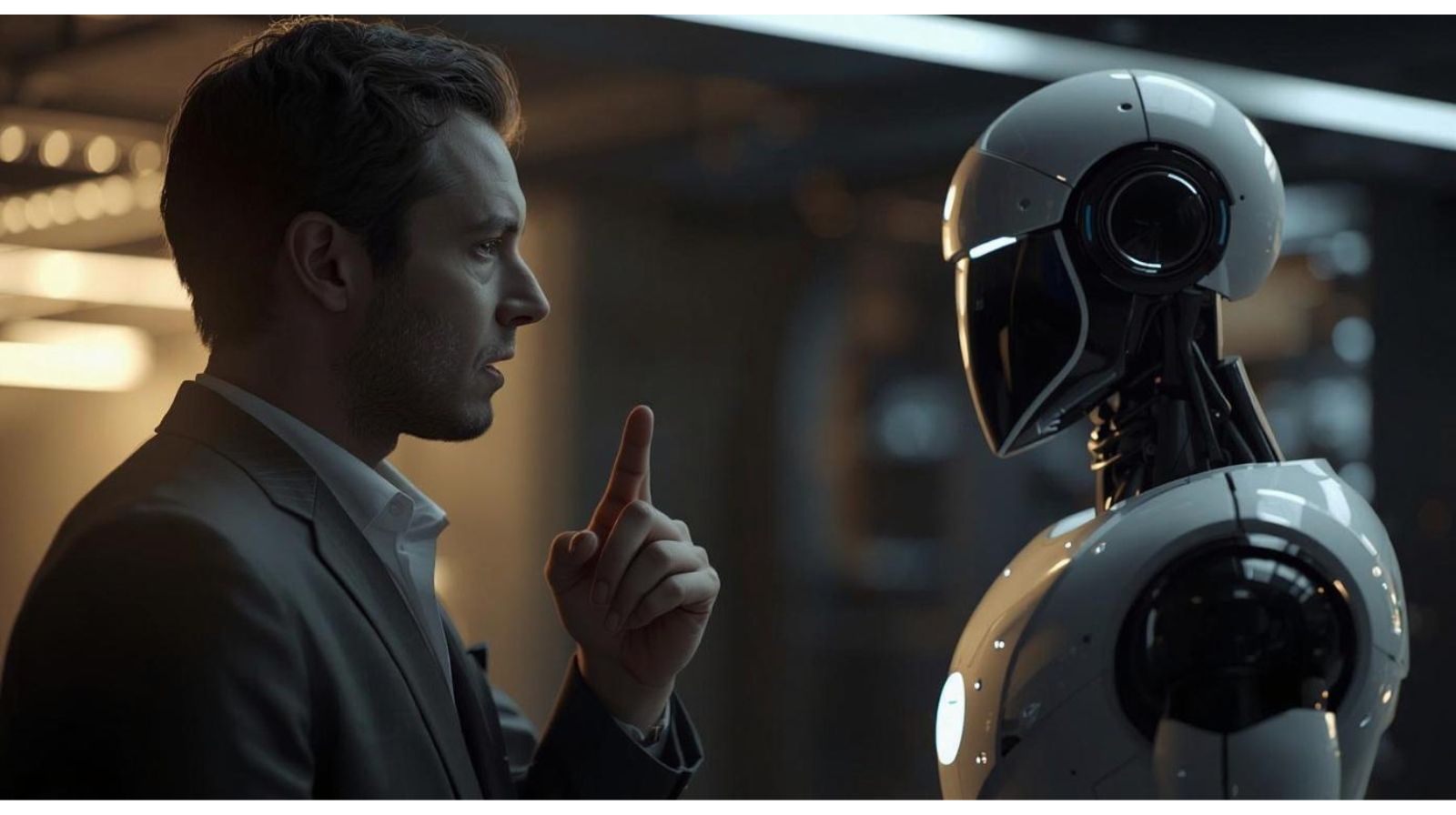

AI is everywhere - from chatbots to customer review analysis. But truly understanding human emotion remains one of its biggest challenges.

In our latest user story, Prof. Veronique Hoste (Ghent University, LT3) explains why language models often struggle with Dutch cultural nuance — and why emotion detection is more than just “positive” or “negative.”

Through the SentEMO project, her team developed aspect-based sentiment and emotion detection, which links each opinion to the specific aspect it targets and so gives companies deeper insights. A review like “The food was fantastic, but the waiter was rude” shouldn’t be labelled neutral: it should reveal what works and what doesn’t.
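To make the restaurant example concrete, here is a minimal toy sketch of the aspect-based idea in Python. It is not the SentEMO or Alfasent method, which the story does not describe. The lexicons `ASPECTS` and `POLARITY`, the clause-splitting rule, and all function names are hypothetical and chosen only for illustration.

```python
import re

# Hypothetical toy lexicons, for illustration only (not from SentEMO).
ASPECTS = {"food": "food", "waiter": "service", "service": "service"}
POLARITY = {"fantastic": 1, "great": 1, "rude": -1, "slow": -1}


def tokenize(text):
    """Lowercase the text and return its alphabetic word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def to_label(score):
    """Map a numeric score to a coarse sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def document_sentiment(review):
    """Single label for the whole review: opposite opinions cancel out."""
    return to_label(sum(POLARITY.get(w, 0) for w in tokenize(review)))


def aspect_sentiment(review):
    """One label per aspect, scored clause by clause."""
    scores = {}
    # Assumption: commas, "but" and "and" separate opinions about different aspects.
    for clause in re.split(r",|\bbut\b|\band\b", review.lower()):
        words = tokenize(clause)
        clause_score = sum(POLARITY.get(w, 0) for w in words)
        for aspect in {ASPECTS[w] for w in words if w in ASPECTS}:
            scores[aspect] = scores.get(aspect, 0) + clause_score
    return {aspect: to_label(s) for aspect, s in scores.items()}


review = "The food was fantastic, but the waiter was rude"
print(document_sentiment(review))  # neutral
print(aspect_sentiment(review))    # {'food': 'positive', 'service': 'negative'}
```

The document-level score averages the two opinions away, while the per-aspect view keeps them apart. A real system would rely on trained models rather than word lists, and would also have to handle negation, irony, and the cultural nuance mentioned above.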

This research led to the spin-off Alfasent, proving how academic innovation can translate into real business value. Discover the full story!