Simulating the Invisible: How Supercomputing Advances Black Hole Research

Plasma as a Window to Black Hole Physics

Black holes are among the most mysterious objects in the universe. Unlike stars and galaxies, black holes do not emit any radiation, making them effectively "black" and invisible against the backdrop of space. Their location far beyond our current reach makes it impossible to send satellites or spacecraft for close study. Consequently, our understanding of black holes relies on indirect observations.

There are several ways to study black holes. One of them is by examining their environments. The universe is filled with a very low-density plasma, composed of ionised gas where electrons and protons move freely. In certain regions, like around black holes, this gas can accumulate due to the intense gravitational pull. As it orbits the black hole, the gas experiences friction and accelerates, reaching extremely high temperatures. It also interacts with the strong magnetic fields surrounding the black hole. These processes cause the plasma to emit significant radiation, which we can observe from Earth. Although the light originates in the surrounding plasma rather than directly from the black hole, it still allows us to infer the black hole's presence. By studying this plasma, we can gain insights into the dynamics of black holes.

Fabio Bacchini, an Assistant Professor at the Centre for mathematical Plasma Astrophysics (CmPA) of KU Leuven, uses numerical simulations to model the flow of plasma around a black hole.

The Computational Challenge of Plasma Simulations

Plasma simulations can be carried out in two fundamentally different ways. One approach models plasma at the macroscopic level, treating it as a fluid rather than as individual particles. This is similar to how we can describe a river: we talk about its temperature, density, and flow velocity without tracking every single water molecule. These quantities are meaningful only as averages; describing each molecule individually is unnecessary if the goal is to understand the river’s large-scale behaviour.
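The river analogy can be made concrete with a small Python sketch. It is purely illustrative and not taken from any simulation code: the function name `fluid_moments`, the one-dimensional velocities, and all numbers are assumptions chosen for the example. It shows how a large set of individual particle velocities reduces to the three averaged quantities a fluid description works with.

```python
import random
import statistics


def fluid_moments(velocities, volume):
    """Reduce individual 1D particle velocities to fluid-style averages."""
    density = len(velocities) / volume             # particles per unit volume
    bulk_velocity = statistics.fmean(velocities)   # mean flow velocity
    # The spread of velocities around the mean flow plays the role of
    # temperature (in units where particle mass and Boltzmann's constant are 1).
    temperature = statistics.pvariance(velocities, mu=bulk_velocity)
    return density, bulk_velocity, temperature


random.seed(0)
# Hypothetical "river": 100,000 molecules drifting at 2.0 with a thermal spread of 0.5.
particles = [random.gauss(2.0, 0.5) for _ in range(100_000)]

density, bulk_velocity, temperature = fluid_moments(particles, volume=10.0)
print(f"density={density:.0f}  flow velocity={bulk_velocity:.3f}  temperature={temperature:.3f}")
```

The three returned numbers summarise all 100,000 particles, which is the kind of reduction a fluid model relies on. Anything that depends on what a single particle does is lost in the averaging.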

Fluid-based plasma simulations work in the same way. They are well-suited for studying large-scale structures and dynamics, such as how plasma is distributed in space, how it moves, and how it forms enormous structures. However, some phenomena cannot be captured at this level because they occur at the scale of individual particles. For example, the processes by which particles are accelerated to relativistic energies—where they emit radiation—are inherently particle-scale effects.

To study these processes, a second class of simulations is required: particle-based simulations that explicitly describe individual particles one by one. Realistic plasmas contain enormous numbers of particles that must be followed over long times and large spatial scales, making such calculations extremely demanding. These simulations can capture physics that fluid models cannot, but they require immense computational resources.

Fabio Bacchini: “Both approaches are essential and complementary, but particle-based simulations are only feasible with access to supercomputers. In fact, much of modern black-hole and astrophysical plasma research relies on high-performance computing, and many of the major advances of the past 10 to 20 years would not have been possible without it. Supercomputing is therefore not optional in this field—it is fundamental.”

Bringing Strong Gravity and Orbiting Motion into Particle Simulations

Fabio Bacchini and his team developed a novel algorithm implemented in a Particle-in-Cell (PIC) code: “PIC is a numerical method for modelling plasmas at the particle scale; in this case, because the plasma orbits a black hole, we needed to include strong gravity from the black hole itself, as well as the orbiting motion, which effectively imposes shear forces on the plasma. Gravity and shearing fundamentally influence the plasma dynamics and drive a sustained turbulent state. This turbulence is thought to be the fundamental ingredient that produces radiation: it dissipates magnetic and bulk-motion energy from large scales and cascades it down to single-particle scales, where it can be transferred to particles, thereby accelerating them to very high energies. Our new algorithm captures this process but requires HPC resources to produce results.”
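To give a flavour of what "modelling plasmas at the particle scale" involves, the sketch below shows one standard building block of many PIC codes: the Boris scheme for advancing a charged particle in electric and magnetic fields. It is not the team's algorithm. It is the textbook non-relativistic version, with no gravity and no shear, and the function names, field values, and time step are assumptions made for illustration.

```python
import math


def cross(a, b):
    """Cross product of two 3-vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def boris_push(x, v, E, B, q_over_m, dt):
    """Advance one particle by one time step (non-relativistic Boris scheme)."""
    half = 0.5 * q_over_m * dt
    # First half of the electric acceleration.
    v_minus = tuple(vi + half * Ei for vi, Ei in zip(v, E))
    # Rotation of the velocity around the magnetic field.
    t = tuple(half * Bi for Bi in B)
    t_sq = sum(ti * ti for ti in t)
    s = tuple(2.0 * ti / (1.0 + t_sq) for ti in t)
    v_prime = tuple(vm + c for vm, c in zip(v_minus, cross(v_minus, t)))
    v_plus = tuple(vm + c for vm, c in zip(v_minus, cross(v_prime, s)))
    # Second half of the electric acceleration, then move the particle.
    v_new = tuple(vp + half * Ei for vp, Ei in zip(v_plus, E))
    x_new = tuple(xi + dt * vi for xi, vi in zip(x, v_new))
    return x_new, v_new


# Hypothetical test case: a particle gyrating in a uniform magnetic field.
x, v = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)
for _ in range(1000):
    x, v = boris_push(x, v, E=(0.0, 0.0, 0.0), B=(0.0, 0.0, 1.0),
                      q_over_m=1.0, dt=0.1)

speed = math.sqrt(sum(vi * vi for vi in v))
print(f"speed after 1000 steps: {speed:.12f}")
```

With no electric field the magnetic step is a pure rotation, so the particle's speed stays constant up to rounding error. That property is one reason the scheme is popular. A production PIC code repeats a step like this for every particle at every time step, and also updates the fields from the particles, which is where the computational cost comes from.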

What the Simulations Reveal

Recent simulations of plasma around black holes have provided significant insights into the bursts of highly energetic radiation, referred to as flares, observed from black-hole environments. Unlike the continuous light emitted by stars, these flares are intense and occur over short periods. The mechanism behind this radiation burst involves converting magnetic energy stored in the strong magnetic fields surrounding the black hole into kinetic energy.

Through extensive numerical simulations over the past 6 to 7 years, researchers found that interactions between magnetic fields and plasma can lead to magnetic reconnection and turbulence. These processes allow for the depletion of magnetic energy, accelerating plasma to relativistic speeds, which subsequently emits powerful bursts of radiation. This understanding reshapes our knowledge of how energetic phenomena in the vicinity of black holes are produced.

Scaling Science through High-End Computing Infrastructure

Fabio Bacchini emphasises the need to use VSC's Tier-1 facilities, such as Hortense, due to the computational intensity of simulating black-hole plasmas, which require 2-3 million CPU hours per simulation. Exploring large parameter spaces demands numerous simulations, making efficient resource allocation essential.

Fabio Bacchini: “Given the novel method we developed, we could, in principle, run simulations of black-hole plasmas. However, Tier-2 facilities are not enough for this task. The simulations are very computationally intensive, with potential costs of 5-10 million CPU hours per simulation. Therefore, we also utilise VSC's Tier-1 facilities like Hortense.”

From National to European Supercomputing

Fabio and his team also scaled up from using local Tier-1 resources to EuroHPC JU systems, such as LUMI [1], Karolina [2], and MeluXina [3]. Over the last 4-5 years, they have obtained and used approximately 100 million CPU-hours on VSC clusters and on LUMI allocations.

By expanding resources across borders, they can run a wide range of simulations, routinely using up to 16,000 processors in parallel, obtaining results within a total time of weeks or months.
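A rough back-of-the-envelope check connects these numbers. The sketch below divides the per-simulation costs quoted in this story (2-3 million and potentially 5-10 million CPU hours) by 16,000 processors. It assumes ideal parallel scaling, which real codes do not quite reach, and it ignores queueing time. The function name `wall_clock_days` and the `parallel_efficiency` parameter are introduced only for this illustration.

```python
def wall_clock_days(cpu_hours, cores, parallel_efficiency=1.0):
    """Estimate elapsed days for a job, given its total CPU-hour cost."""
    elapsed_hours = cpu_hours / (cores * parallel_efficiency)
    return elapsed_hours / 24.0


for cpu_hours in (2e6, 3e6, 5e6, 10e6):
    days = wall_clock_days(cpu_hours, cores=16_000)
    print(f"{cpu_hours / 1e6:.0f} million CPU hours on 16,000 cores: about {days:.1f} days")
```

Under these assumptions a single simulation occupies the machine for roughly five days to a month, so a parameter study made up of many such runs naturally stretches over weeks or months.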

Fabio Bacchini: “The VSC resources we have in Belgium, like Hortense, are already exceptional, and serve as an excellent foundation for most of my group's work. However, when it comes to accessing large-scale infrastructure like Finland’s supercomputer LUMI, it significantly enhances our capabilities. Utilising LUMI allows us to scale up our efforts by a factor of 10. For instance, with LUMI, I can study the evolution of plasma around a black hole across extensive regions of space, enabling me to model this system over much longer timescales and larger scales than previously possible.”

Fabio: “It is important to study phenomena involving plasmas and black holes over extremely long time scales. For example, colleagues of mine recently conducted the largest simulation to date of a black hole surrounded by plasma. In this simulation, they were able to extend both the scale and duration to a point where the plasma around the black hole began to fragment into microstructures. These microstructures then interacted with one another in ways that had not been previously observed. Such insights would not have been possible on smaller infrastructures.”

Preparing Astrophysics for the GPU Era

The transition from CPU to GPU computing in astrophysics offers significant increases in computational power, with performance improvements that can reach a factor of 10 or more. This leap not only accelerates the processing of complex simulations but also opens the door for ground-breaking discoveries.

However, many researchers face challenges in adapting their code for GPU usage, as they often lack a background in computational sciences and must invest significant time and resources in programming.

Collaboration with European organisations and initiatives can help researchers overcome these hurdles.

Fabio: “I am actively involved in the EuroHPC Centre of Excellence, SPACE [4], which aims to enhance several longstanding computer codes and astrophysics simulations for exascale computing that is expected to emerge soon. The European initiative COST Action [5] EXPAND [6] fosters collaboration among numerous universities, research institutes, and computing centres, creating a robust interdisciplinary network. It essentially provides a substantial basis to advance science in a very practical way.”

Fabio clarifies: “In my own research group, we are making strides in upscaling our codes to run efficiently on GPUs. Looking ahead to 2026, it’s becoming increasingly clear that this investment is essential to advancing our astrophysics work. The transition is not just beneficial; it is crucial for pushing the boundaries of what we can achieve in our field.”

HPC Unlocking New Frontiers in Black Hole Research

Fabio Bacchini: “Exploring large parameter spaces requires numerous simulations and significant resource allocation, but it is essential for understanding black hole physics. Our approach captures these processes from first principles, enhancing our insights into black hole observations. High-performance computing (HPC) resources are vital to this work, which has led to groundbreaking publications in prestigious journals like The Astrophysical Journal Letters and Physical Review Letters. Our research has deepened our understanding of plasma behaviour around black holes and opened new research pathways.”

[1] a pre-exascale supercomputer located in Kajaani, Finland

[2] a petascale EuroHPC supercomputer located in Ostrava, Czechia

[3] a petascale EuroHPC supercomputer located in Bissen, Luxembourg

[5] https://www.cost.eu/cost-actions/what-are-cost-actions/

[6] https://www.cost.eu/actions/CA24149/

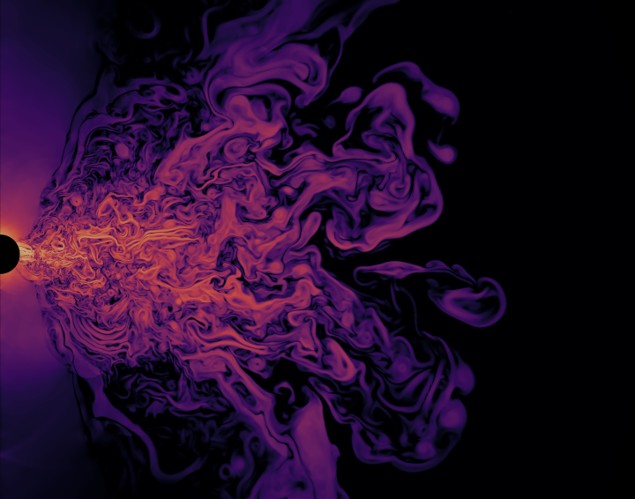

Video 1: The density around a black hole

This video is related to the image in the header of this user story.

The image in the header illustrates the simulated spatial distribution of plasma density around a black hole (on the left, in black). We can appreciate the highly turbulent nature of the plasma flow, as well as the presence of two clean “jets” of plasma around the polar regions.

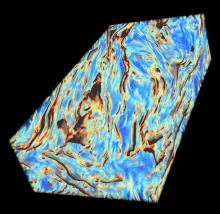

Video 2: Magnetic field in the disk

This video is related to Image b and shows the magnetic field in the disk.

Since 2022, Fabio Bacchini has been an Assistant Professor at the Centre for mathematical Plasma Astrophysics (CmPA) of KU Leuven, in Belgium, where he also obtained his PhD. In his research, he actively develops, codes, and applies numerical techniques for magnetohydrodynamic and particle simulations of Newtonian and (special/general) relativistic plasmas in astrophysics. He uses the world's most advanced supercomputers to run those simulations across hundreds of thousands of CPUs. Together with his research group, he aims to push the boundaries of the state of the art in theoretical astroplasma physics.

More info: https://fabsilfab.wixsite.com/home